Algospeak: Ninjaing Around Social Media Censorship

Social media users are increasingly adopting a language technique designed to bypass censorship restrictions. It’s kinda complicated…

Algospeak

The term “algospeak” (a contraction of “algorithm” and “speak”) describes a coded way of evading censorship and moderation. It is used by people on social platforms who want to raise or discuss topics that typically get buried or removed by social media algorithms. In practice, it means replacing words and phrases that trip the platforms’ censorship algorithms with symbols or codes that still convey the intended message. The offending words and phrases are swapped for innocuous words, emojis or memes, so that users can express what they meant without external interference or penalisation.

Some common examples:

- “Leg booty” when referencing “LGBTQ”.

- “The vid” when referencing “COVID-19”.

- “Cornucopia” when referencing “homophobia”.

- “Ouid” when referencing “weed”.

- “Unalive” when referencing “suicide”, “dead” or “kill”.

- “Dance party” when referencing anti-vaccination groups.

- The corncob emoji when referencing “porn”.

Why the Need?

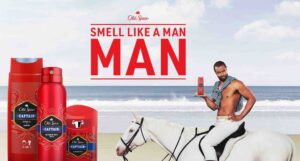

Facebook, Instagram, TikTok and YouTube operate on a business model that puts their two main stakeholders, the user and the advertiser, in conflict. More users means a larger audience to sell to advertisers, but the larger the audience, the harder it becomes to control the conversation. That loss of control can deter advertisers, so the platforms lock down their content through censorship.

In other words, the platforms want as many users as possible but want them to behave in a way that does not deter advertisers. Platform algorithms police conversations and subject matter in a bid to deliver advertiser-friendly content, as well as to combat misinformation and harmful behaviour such as hate speech or incitement to violence.

Members of the LGBTQ community, for example, believe their content has been penalised simply for mentioning the word “gay” in their videos. Women’s health is another subject that people say is hindered by content moderation and penalisation, for fear of deterring advertisers.

One consequence of moderation is that algorithms suppress content and conversations incorrectly. They are often unable to understand the context in which a word or phrase was used, and so they penalise genuinely legitimate content.
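To make the context problem concrete, here is a deliberately naive sketch in Python. It assumes a simple keyword blocklist, which is far cruder than the mix of machine-learning classifiers and human review that large platforms are reported to use; the blocklist, the `flag_post` function and the example posts are all hypothetical. Even so, it shows both failure modes at once: legitimate posts are flagged because a word appears regardless of context, while algospeak substitutions pass straight through.

```python
import re

# Hypothetical blocklist for illustration only; not any platform's real list.
BLOCKLIST = {"gay", "suicide", "covid"}


def flag_post(text: str) -> set[str]:
    """Return the blocklisted words found in text, ignoring case and context."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {w for w in words if w in BLOCKLIST}


posts = [
    "Proud to be gay and supporting my friends this month",  # legitimate, but flagged
    "Suicide prevention hotlines save lives",                # legitimate, but flagged
    "Let's talk about leg booty rights",                     # algospeak, not flagged
    "Stuck at home with the vid again",                      # algospeak, not flagged
]

for post in posts:
    hits = flag_post(post)
    print("FLAGGED" if hits else "ok     ", sorted(hits), "|", post)
```

Adding “leg booty” or “the vid” to the list would catch those posts, but it simply prompts the next substitution, which is the cat-and-mouse dynamic described in the rest of this article.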

What the algorithm (or, for that matter, the platform) doesn’t consider is that conversations don’t take place in a dedicated space at a dedicated time. They occur organically when people feel safe and comfortable, among people they trust, whether on a video-sharing platform or a technology forum. We open up and have discussions when it feels right.

It is through frustration and innovation, and occasionally through sinister intent, that online users have created algospeak, which gives them the freedom to converse as they see fit.

The Positive

Using algospeak helps users and creators discuss topics that might otherwise be censored, although often only temporarily. It doesn’t take long for the platforms to wise up to the change in language.

The freedom to converse on any topic, controversial or not, is important in helping people learn and understand. For minorities and underrepresented communities in particular, finding space for even basic conversation can be difficult. It is not as simple as finding a subject-specific chat room in which to discuss personal or social issues openly; that is not how organic human conversations come about.

Similarly, social issues such as Black Lives Matter, the Me Too movement and LGBTQ rights often struggle to receive clear, rational and non-partisan discussion and debate on these platforms, because they are often treated as damaging (or simply not advertiser-friendly) even when they are not.

Algospeak sidesteps some of these limitations, though often only in the short term and occasionally with serious consequences for the user, in the form of penalties against individual accounts.

The Negative

There is naturally a dark side to algospeak. The same tactic is used for nefarious purposes and turns up in harmful online communities and discussions.

Anti-vaccination groups, for example, use “Dance Party” as a code name for their online groups, and “dancing” as a euphemism for people who have been vaccinated. Pro-eating-disorder communities, which encourage people to adopt unhealthy and unsafe eating habits, also use algospeak to avoid detection and carry on without censorship (or with less urgent censorship). Then there are the many algospeak methods used to get adult content in front of young viewers.

How Are Social Media Platforms Responding?

Social media platforms are of course aware of algospeak.

A Washington Post article noted that Twitch and other online platforms have removed certain emojis that had become popular with algospeakers, who were using them in ways that conflicted with the platforms’ own rules.

TikTok, which has been heavily criticised for its content moderation, is seeking to educate its users through its own resources. It is also reportedly increasing transparency and accountability so that users can understand how the TikTok algorithm operates, and why.

The Role Advertising Plays in Influencing Censorship

The relationship between advertising and platform censorship is, quite simply, complicated. Platforms want to sell as much ad space as possible; advertisers want a platform that will not associate them with what they consider to be controversial content. The platform must therefore set parameters that reject those controversial topics. However, it must also continue to provide an “open” environment for users, or they will go elsewhere, shrinking the audience available to advertisers and, in turn, reducing the advertising revenue the platform can generate. This is referred to as advertising censorship: the advertiser may not formally set the boundaries, but it directly influences the boundaries that platforms set.

The Endgame?

There isn’t an obvious one.

Platforms will work out methods to control content, as they always have. Users will devise ways to bypass those methods, as they always do. Punishment will be selective, so as not to shrink the audience too much, but persuasive enough to deter others from behaving in a similar way.

And advertisers will continue to advertise. In fact, with the growth and diversity of the social media content marketplace, advertisers no longer have to limit themselves to Facebook’s or Google’s advertising parameters. With the boom of TikTok, Twitch and Spotify, advertisers can find the censorship model that suits them, rather than being tied to one or two operators. This in turn puts more pressure on every platform to provide an environment that advertisers find attractive, potentially to the detriment of the end user.